Unable to determine the shape of numpy array in loop containing transpose operation

I’ve been trying to build a small neural network to learn the softmax function, following the article at: https://mlxai.github.io/2017/01/09/implementing-softmax-classifier-with-vectorized-operations.html

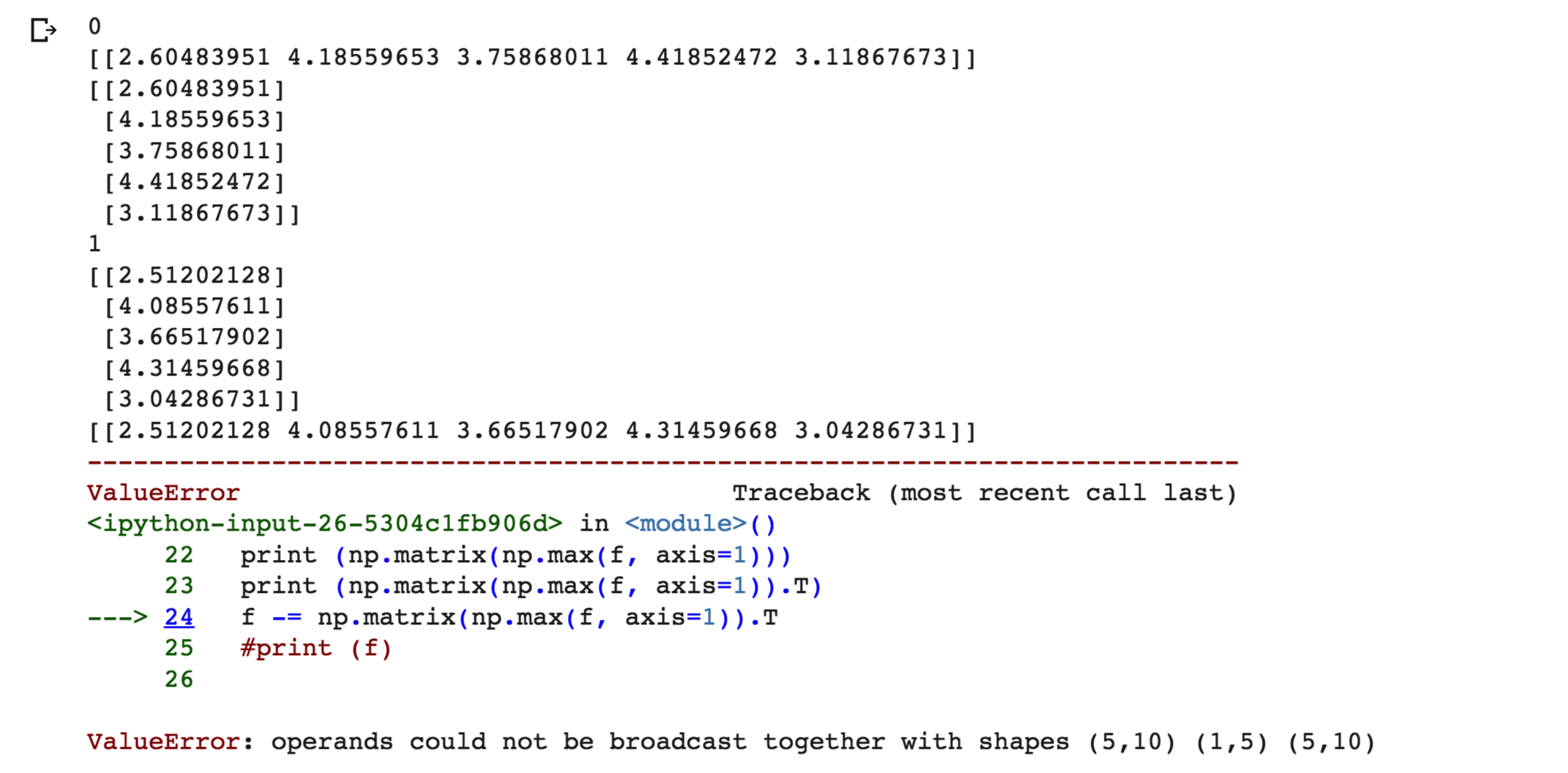

It works for a single iteration. However, when I wrap it in a loop to train the network with updated weights, I get the following error: ValueError: operands could not be broadcast together with shapes (5,10) (1,5) (5,10). I have attached a screenshot of the output here.

While debugging, I found that np.max() returns arrays of shape (5,1) and (1,5) in different iterations, even though axis is set to 1. Please help me figure out what is wrong with the following code.

import numpy as np

N = 5
D = 10
C = 10
W = np.random.rand(D, C)
X = np.random.randint(255, size=(N, D))
X = X / 255
y = np.random.randint(C, size=(N))
#print(y)
lr = 0.1
reg = 0.1  # not defined in the original post; value assumed so the snippet runs

for i in range(100):
    print(i)
    loss = 0.0
    dW = np.zeros_like(W)
    N = X.shape[0]
    C = W.shape[1]

    f = X.dot(W)
    #print(f)
    print(np.matrix(np.max(f, axis=1)))
    print(np.matrix(np.max(f, axis=1)).T)
    f -= np.matrix(np.max(f, axis=1)).T  # the ValueError is raised here on the second iteration
    #print(f)

    term1 = -f[np.arange(N), y]
    sum_j = np.sum(np.exp(f), axis=1)
    term2 = np.log(sum_j)
    loss = term1 + term2
    loss /= N
    loss += 0.5 * reg * np.sum(W * W)
    #print(loss)

    coef = np.exp(f) / np.matrix(sum_j).T
    coef[np.arange(N), y] -= 1
    dW = X.T.dot(coef)
    dW /= N
    dW += reg * W

    W = W - lr * dW

Solution

In your first iteration, W is an instance of np.ndarray with shape (D, C). f is therefore also an ndarray, so np.max(f, axis=1) returns an ndarray of shape (N,), which np.matrix() turns into shape (1, N). The transpose then gives (N, 1).

But in your next iteration, W is an instance of np.matrix (it inherits the type from dW in W = W - lr*dW, because dW is computed from coef, which is an np.matrix). f is then an np.matrix as well, and np.max(f, axis=1) returns an np.matrix of shape (N, 1), which passes through np.matrix() unchanged and becomes shape (1, N) after the .T. That (1, N) row cannot be broadcast against the (N, C) array f, hence the error.
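The shape difference can be reproduced in isolation. This is only a minimal sketch with made-up arrays (f_arr and f_mat are illustrative names, not from the question), and it assumes a NumPy version that still provides np.matrix:

```python
import numpy as np

N, C = 5, 10
f_arr = np.random.rand(N, C)   # plain ndarray, as in the first iteration
f_mat = np.matrix(f_arr)       # np.matrix, as in the later iterations

# ndarray: the reduced axis is dropped, so np.matrix() builds a row vector
m_arr = np.max(f_arr, axis=1)
shape_arr = (m_arr.shape, np.matrix(m_arr).shape, np.matrix(m_arr).T.shape)

# np.matrix: the result stays 2-D, so .T turns the column into a row
m_mat = np.max(f_mat, axis=1)
shape_mat = (m_mat.shape, np.matrix(m_mat).shape, np.matrix(m_mat).T.shape)

print(shape_arr)  # ((5,), (1, 5), (5, 1))
print(shape_mat)  # ((5, 1), (5, 1), (1, 5))
```

The same three calls give a column in one case and a row in the other, purely because of the input type.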

To resolve this issue, make sure you don’t mix np.ndarray with np.matrix. Either define everything as np.matrix from the beginning (i.e. W = np.matrix(np.random.rand(D,C))), or use keepdims to keep the reduced axis, like this:

f -= np.max(f, axis = 1, keepdims = True)

This keeps everything 2-D without having to convert to np.matrix. (Do the same for sum_j.)
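Putting the keepdims suggestion together, the training loop might look roughly like the sketch below. It is not the asker's exact code: reg = 0.1 is an assumed value (the question never defines it), the random data comes from a seeded generator for repeatability, and the per-sample losses are summed into a scalar, which the question's code does not do.

```python
import numpy as np

N, D, C = 5, 10, 10
lr = 0.1
reg = 0.1  # assumed value; the question does not define reg

rng = np.random.default_rng(0)
W = rng.random((D, C))
X = rng.integers(255, size=(N, D)) / 255
y = rng.integers(C, size=N)

for i in range(100):
    f = X.dot(W)                                      # (N, C) ndarray
    f -= np.max(f, axis=1, keepdims=True)             # (N, 1) broadcasts across columns

    sum_j = np.sum(np.exp(f), axis=1, keepdims=True)  # (N, 1)
    # mean cross-entropy plus L2 penalty, reduced to a scalar
    loss = np.sum(-f[np.arange(N), y] + np.log(sum_j[:, 0])) / N
    loss += 0.5 * reg * np.sum(W * W)

    coef = np.exp(f) / sum_j                          # softmax probabilities, (N, C)
    coef[np.arange(N), y] -= 1
    dW = X.T.dot(coef) / N + reg * W

    W = W - lr * dW                                   # W stays an ndarray

print(type(W).__name__, W.shape)
```

Because no np.matrix is ever created, W, f and coef remain plain ndarrays in every iteration and the shapes no longer change between passes.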